From TVOF to the Future: Scientific Policies and Post-Digital Humanities. A Philologist’s Point of View

As TVOF draws to a close and we complete our outputs, I have moved back to Italy: since September 2020 I have been working at the Scuola Superiore Meridionale in Naples. I am starting a new project on Judeo-French historiography, centered on the first critical edition of the Livre by Moses ben Abraham, a mid-13th-century compilation based on Hebrew sources. My experience with TVOF is having an incalculable impact on this new enterprise. In this post, I would like to share some reflections on how TVOF and similar projects are training a generation of post-digital textual scholars, and on the challenges our generation is facing.

Vassar College (Poughkeepsie, NY), 1987: the international and interdisciplinary team responsible for the intellectual foundation of the Text Encoding Initiative (TEI), the set of encoding guidelines that ensures the durability, portability and flexibility of digitally edited texts. Image taken from: https://tei-c.org/about/history/ (©CC BY 3.0).

The post-doc with TVOF was my first research job: I joined the team in January 2016, just a few months after completing my PhD. Before that, I had been introduced to the Digital Humanities at the Dixit-MMSDA 2014 Summer School, hosted by King’s College London and the University of Cambridge and organized by the Digital Scholarly Editions Initial Training Network. I was part of a cohort of PhD students and early career researchers who had begun to explore the world of digital editing together under the supervision of enthusiastic and dedicated scholars. I remember our exchanges, during and after the Summer School, about the possibility of pursuing a career in digital scholarship as distinct from, and an alternative to, traditional, pre-digital humanities. This dilemma did not rest on the assumption that the two were theoretically different; on the contrary, it was very clear to us that they were not. But we were well aware that the two practices required very different skills at an advanced level. We asked ourselves which skills we wanted to acquire, and how to acquire them during our post-docs. My view was that, if I had to choose, I would opt for a job on a traditional, non-digital project; I felt that I still had a lot to learn in that field. But I really hoped not to face that kind of dilemma. In the end, I was very lucky: joining the TVOF team gave me the opportunity to work on a digital project with a strong traditional component. Over the past four years, we have been engaged both in designing the digital edition of the Histoire ancienne and in solving problems concerning its text and its tradition that had first been formulated by 19th-century scholarship and had not yet found a solution. In the meantime, the panorama of research in the humanities was changing fast.

Nowadays, digital and pre-digital humanities no longer appear to me as two alternative and separate fields. On the contrary, digital methodologies have filtered into research in the humanities so deeply that every research project, whatever its methodology, can be considered post-digital. Even the most determined opponents of the digital (if any exist) have to face the fact that their own works, printed in sturdy paper books, rely on digital tools (such as dictionaries, corpora, editions and secondary literature published online), and that their usefulness also depends on the maintenance and accessibility of those tools. From the point of view of textual scholarship, digital editing is now an option that is more accessible and often cheaper than publication as a printed book. More importantly, a digital component is often crucial in obtaining external research funding. Increasingly, going digital is less a free choice than a strategy for complying with local, national and international funding policies.

This compliance is not opportunistic. On the contrary, researchers in the humanities share the views that originally inspired these policies: making our outputs accessible to the widest possible audience (regardless of economic resources), and enabling a transversal readership that includes scholars in other fields and people with limited or no familiarity with scholarly conventions and language. This second aspect, outreach, plays a crucial role in the survival of disciplines without a direct technological impact, such as the humanities. The current social and economic emergency and the increasing competition for funding will probably put our generation more and more often in the position of privileging the widest reception of our work over the completeness of the results and the refinement of the methodologies, two elements that normally delay publication and lend our products a certain esoteric character.

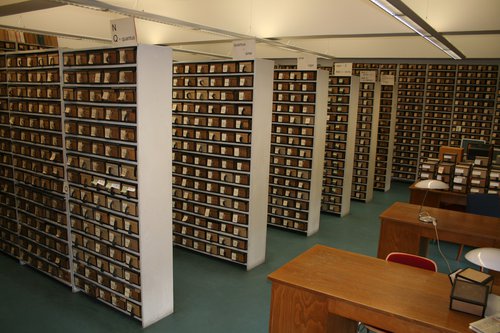

The Zettelarchiv of the Thesaurus Linguae Latinae at the Bayerische Akademie der Wissenschaften in Munich. The dictionary is printed in several volumes, but since 2019 it has been available in PDF format on the TLL website. Photo by N. P. Holmes (©CC BY 3.0).

The latter element, methodological refinement, is crucial. In the context of our ‘inexact’ sciences, methodology is a language in which certain experiences, and judgements about those experiences, are recorded and communicated. A critical edition (no different in this respect from a dictionary entry or a linguistic analysis of an ancient text) is a discourse through which someone who has come to know the sources as intimately as possible tries to provide a picture based on their presumptions and interpretations. The referents of this language are not objects or numerical relations but educated impressions. That is why the humanities speak (and write) many esoteric languages, and why communication is complex, not only with the outside world but also between different areas of the humanities and between different national and subnational traditions. The broader social usability of our outputs comes into tension with these facts in at least two situations: when we scholars are asked to judge a project outside our own field and conceived in another scientific tradition, and when we expect our products to be easily accessible to non-specialists. This tension is neither good nor bad in itself, but acknowledging it is important.

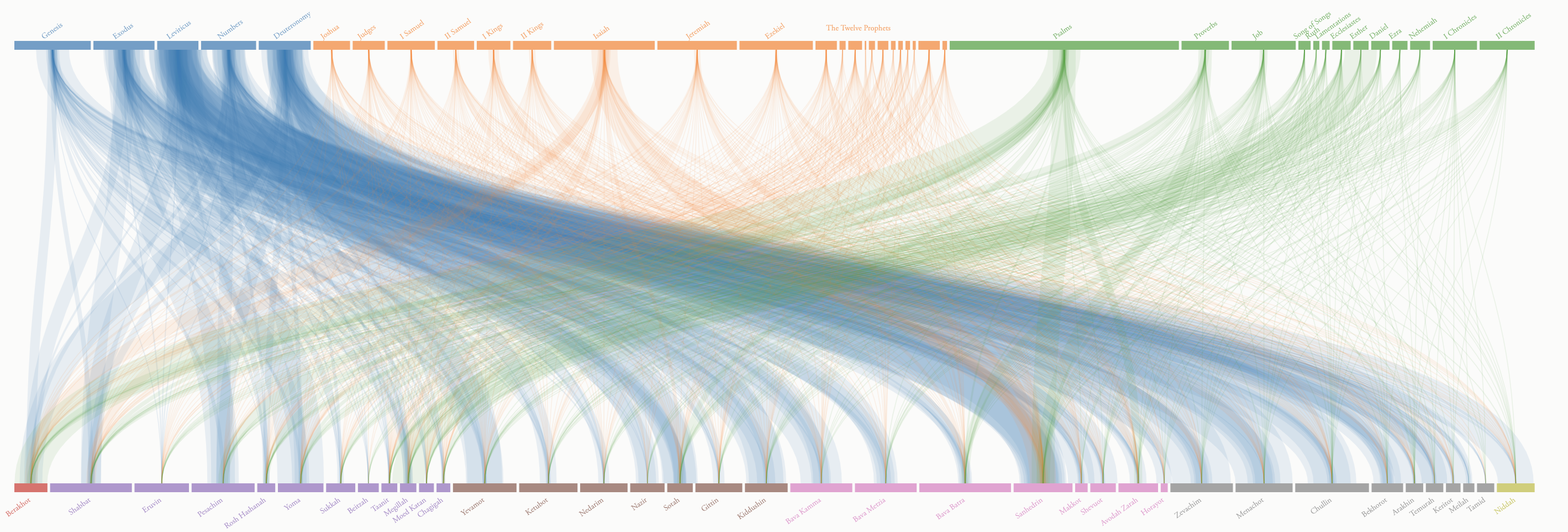

The visual tool to explore the textual connections between the Talmud and the Tanakh (the Hebrew Bible) from the page https://www.sefaria.org/explore. Sefaria: A Living Library of Jewish Texts is a born-digital archive of the foundational texts of the Hebrew tradition. It is developed and maintained by a non-profit organization founded in 2011 by the author Joshua Foer and Google alum Brett Lockspeiser.

In the pre-digital humanities, each methodology and local tradition designed its own types of output. This process always took place in the interplay between the general intellectual climate and local economic structures. Even in the case of the printed book, the possibility of printing multi-volume editions, with a complex and articulated apparatus and a comprehensive commentary, depended on the existence of an economic structure able to channel the necessary investment. So economic constraints are nothing new. What is new is the explosion of digital communication and the commonly held assumption that this technology is making the humanities public and their audience wider.

In many cases, from the point of view of scholars, and particularly of those early in their careers, the translation of our scientific discourse from a specialist, academic register into an interdisciplinary, public one risks being driven mostly by circumstances and lacking planning. This can result in the loss of knowledge and methods that we may not want to lose. In history, loss is inevitable, but it is sometimes possible to choose what to preserve. So the questions that now seem most relevant to me are these: are we really getting something in exchange? Are we actually becoming interdisciplinary and public? Do the investments in producing, preserving and promoting digital scholarly products designed for a wider audience actually result in more frequent exchange between disciplines and in the involvement of the general public?

The servers of the digital library Internet Archive. From Brewster Kahle and Ana Parejo Vadillo, ‘The Internet Archive: An Interview with Brewster Kahle’, in 19: Interdisciplinary Studies in the Long Nineteenth Century 21 (2015) (©CC BY 4.0).

Today we are still in the ‘construction’ phase of post-digital knowledge, but we are starting to collect a critical mass of data concerning the human and financial costs of our experiments, their rate of success, the correlation between particular choices and the actual public value of a project, and the correlation (if any) between this value and the scientific progress made. From the scientific point of view, we can begin to evaluate the efficiency of the transition, asking whether our projects are actually more ambitious than pre-digital ones and whether the conditions set by current policies allow us to fulfill our scientific objectives. As for outreach, we may discover that investments are more efficient when separated from those for research and managed by professionals, or that they create more value when integrated into education through the school system.

Digital humanities projects can evaluate their own impact on scholarly and non-scholarly audiences by looking at data such as web traffic and activity on social networks. Yet, while these parameters show how dedicated and vocal the already engaged are, they may say nothing about actual outreach. That would need to be assessed through a different type of data collection, asking who was reached and what they achieved with the new knowledge or tools. We might discover that certain methodologies ‘reached’ only a limited audience but gave it powerful tools and intellectual agency; or that some methods perceived as old-fashioned turned out to be still scientifically functional, capable of producing outputs that enhance research in emerging or different fields. This kind of reality check, costly in terms of time and resources, has to be carried out primarily by policy makers. But without it, we run the risk of tragically underrating the impact of digital tools and losing any ability to exploit their full potential for producing genuine research and new, deep knowledge.